Linux Server integration for Grafana Cloud

Linux is a family of open-source Unix-like operating systems based on the Linux kernel. Linux is the leading operating system on servers, and is one of the most prominent examples of free and open-source software collaboration.

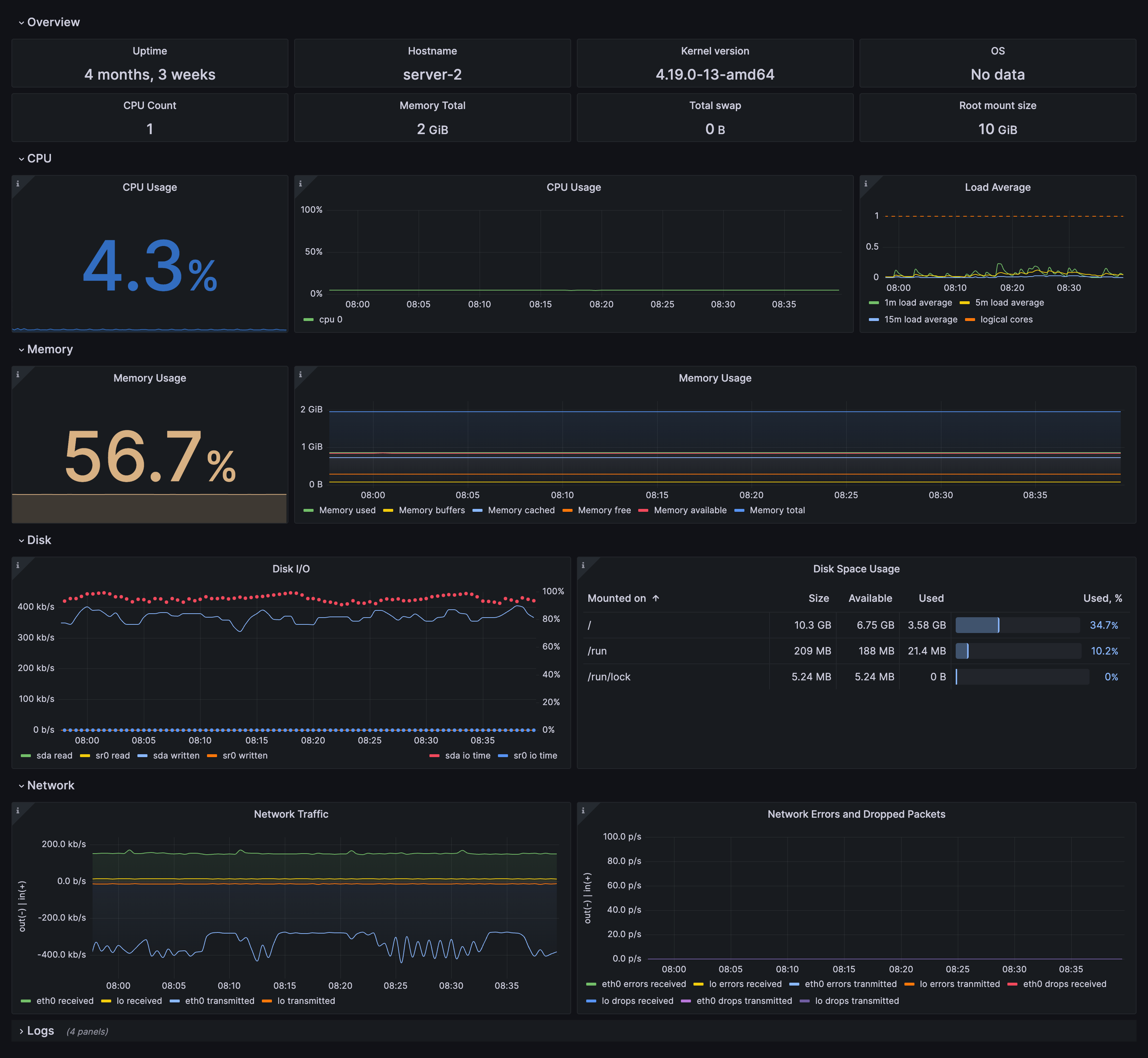

Linux Server integration for Grafana Cloud enables you to collect metrics related to the operating system running on a node, including CPU usage, load average, memory usage, and disk and network I/O, using the node_exporter integration. It also allows you to use the agent to scrape logs.

This integration includes 24 useful alerts and 7 pre-built dashboards to help monitor and visualize Linux Server metrics and logs.

Before you begin

Each Linux node being observed must have its dedicated Grafana Alloy running.

If you want to monitor more than one Linux node with this integration, we recommend using the Ansible collection for Grafana Cloud to deploy Grafana Alloy to multiple machines, as described in this documentation.

Install Linux Server integration for Grafana Cloud

- In your Grafana Cloud stack, click Connections in the left-hand menu.

- Find Linux Server and click its tile to open the integration.

- Review the prerequisites in the Configuration Details tab and set up Grafana Alloy to send Linux Server metrics and logs to your Grafana Cloud instance.

- Click Install to add this integration’s pre-built dashboards and alerts to your Grafana Cloud instance, and you can start monitoring your Linux Server setup.

Configuration snippets for Grafana Alloy

Simple mode

These snippets are configured to scrape a single Linux Server instance running locally with default ports.
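The snippets in this section forward metrics to prometheus.remote_write.metrics_service and logs to loki.write.grafana_cloud_loki, neither of which is defined in the snippets themselves. If your Alloy configuration does not already declare them, a minimal sketch might look like the following. The angle-bracket values are placeholders (assumptions, not real endpoints) that you would replace with the URL and credentials of your own stack.

```alloy
// Sketch only: every value in angle brackets is a placeholder, not a real
// endpoint or credential. Replace each one with the value for your stack.
prometheus.remote_write "metrics_service" {
  endpoint {
    url = "<your_prom_url>"

    basic_auth {
      username = "<your_prom_user>"
      password = "<your_prom_pass>"
    }
  }
}

loki.write "grafana_cloud_loki" {
  endpoint {
    url = "<your_loki_url>"

    basic_auth {
      username = "<your_loki_user>"
      password = "<your_loki_pass>"
    }
  }
}
```

The component labels need to stay metrics_service and grafana_cloud_loki, because the forward_to arguments in the snippets refer to the receivers by those names.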

Manually copy and append the following snippets into your Grafana Alloy configuration file.

Integrations snippets

discovery.relabel "integrations_node_exporter" {

targets = prometheus.exporter.unix.integrations_node_exporter.targets

rule {

target_label = "instance"

replacement = constants.hostname

}

rule {

target_label = "job"

replacement = "integrations/node_exporter"

}

}

prometheus.exporter.unix "integrations_node_exporter" {

disable_collectors = ["ipvs", "btrfs", "infiniband", "xfs", "zfs"]

filesystem {

fs_types_exclude = "^(autofs|binfmt_misc|bpf|cgroup2?|configfs|debugfs|devpts|devtmpfs|tmpfs|fusectl|hugetlbfs|iso9660|mqueue|nsfs|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|selinuxfs|squashfs|sysfs|tracefs)$"

mount_points_exclude = "^/(dev|proc|run/credentials/.+|sys|var/lib/docker/.+)($|/)"

mount_timeout = "5s"

}

netclass {

ignored_devices = "^(veth.*|cali.*|[a-f0-9]{15})$"

}

netdev {

device_exclude = "^(veth.*|cali.*|[a-f0-9]{15})$"

}

}

prometheus.scrape "integrations_node_exporter" {

targets = discovery.relabel.integrations_node_exporter.output

forward_to = [prometheus.relabel.integrations_node_exporter.receiver]

}

prometheus.relabel "integrations_node_exporter" {

forward_to = [prometheus.remote_write.metrics_service.receiver]

rule {

source_labels = ["__name__"]

regex = "node_scrape_collector_.+"

action = "drop"

}

}

Logs snippets


loki.source.journal "logs_integrations_integrations_node_exporter_journal_scrape" {

max_age = "24h0m0s"

relabel_rules = discovery.relabel.logs_integrations_integrations_node_exporter_journal_scrape.rules

forward_to = [loki.write.grafana_cloud_loki.receiver]

}

local.file_match "logs_integrations_integrations_node_exporter_direct_scrape" {

path_targets = [{

__address__ = "localhost",

__path__ = "/var/log/{syslog,messages,*.log}",

instance = constants.hostname,

job = "integrations/node_exporter",

}]

}

discovery.relabel "logs_integrations_integrations_node_exporter_journal_scrape" {

targets = []

rule {

source_labels = ["__journal__systemd_unit"]

target_label = "unit"

}

rule {

source_labels = ["__journal__boot_id"]

target_label = "boot_id"

}

rule {

source_labels = ["__journal__transport"]

target_label = "transport"

}

rule {

source_labels = ["__journal_priority_keyword"]

target_label = "level"

}

}

loki.source.file "logs_integrations_integrations_node_exporter_direct_scrape" {

targets = local.file_match.logs_integrations_integrations_node_exporter_direct_scrape.targets

forward_to = [loki.write.grafana_cloud_loki.receiver]

}

Advanced mode

To instruct Grafana Alloy to scrape your Linux Server instance, go through the subsequent instructions.

The snippets provide examples to guide you through the configuration process.

First, manually copy and append the following snippets into your Grafana Alloy configuration file.

Then follow the instructions below to modify the necessary variables.

Advanced integrations snippets

discovery.relabel "integrations_node_exporter" {

targets = prometheus.exporter.unix.integrations_node_exporter.targets

rule {

target_label = "instance"

replacement = constants.hostname

}

rule {

target_label = "job"

replacement = "integrations/node_exporter"

}

}

prometheus.exporter.unix "integrations_node_exporter" {

disable_collectors = ["ipvs", "btrfs", "infiniband", "xfs", "zfs"]

filesystem {

fs_types_exclude = "^(autofs|binfmt_misc|bpf|cgroup2?|configfs|debugfs|devpts|devtmpfs|tmpfs|fusectl|hugetlbfs|iso9660|mqueue|nsfs|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|selinuxfs|squashfs|sysfs|tracefs)$"

mount_points_exclude = "^/(dev|proc|run/credentials/.+|sys|var/lib/docker/.+)($|/)"

mount_timeout = "5s"

}

netclass {

ignored_devices = "^(veth.*|cali.*|[a-f0-9]{15})$"

}

netdev {

device_exclude = "^(veth.*|cali.*|[a-f0-9]{15})$"

}

}

prometheus.scrape "integrations_node_exporter" {

targets = discovery.relabel.integrations_node_exporter.output

forward_to = [prometheus.relabel.integrations_node_exporter.receiver]

}

prometheus.relabel "integrations_node_exporter" {

forward_to = [prometheus.remote_write.metrics_service.receiver]

rule {

source_labels = ["__name__"]

regex = "node_scrape_collector_.+"

action = "drop"

}

}

This integration uses the prometheus.exporter.unix component to collect system metrics.

The supplied configuration is tuned to exclude any metrics from the exporter which are not used by the integration’s dashboards, alerts, or recording rules. If a broader configuration which includes additional metrics is desired, the prometheus.exporter.unix component can be adjusted accordingly.
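As one possible adjustment, the sketch below changes only the collector lists of the prometheus.exporter.unix block. It assumes, purely for illustration, that XFS metrics and the processes collector are wanted; neither choice comes from the integration itself. Edit the existing block rather than adding a second one, because a configuration cannot contain two components with the same name and label.

```alloy
prometheus.exporter.unix "integrations_node_exporter" {
  // Assumption for illustration: XFS metrics are wanted, so "xfs" is
  // removed from the list of disabled collectors.
  disable_collectors = ["ipvs", "btrfs", "infiniband", "zfs"]

  // Assumption for illustration: also turn on the "processes" collector,
  // which is disabled by default.
  enable_collectors = ["processes"]

  // Keep the filesystem, netclass, and netdev blocks from the supplied
  // snippet here unchanged.
}
```

Any collector you turn on adds series on top of those the dashboards and alerts use, so the active series count for the node is likely to grow.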

Advanced logs snippets


loki.source.journal "logs_integrations_integrations_node_exporter_journal_scrape" {

max_age = "24h0m0s"

relabel_rules = discovery.relabel.logs_integrations_integrations_node_exporter_journal_scrape.rules

forward_to = [loki.write.grafana_cloud_loki.receiver]

}

local.file_match "logs_integrations_integrations_node_exporter_direct_scrape" {

path_targets = [{

__address__ = "localhost",

__path__ = "/var/log/{syslog,messages,*.log}",

instance = constants.hostname,

job = "integrations/node_exporter",

}]

}

discovery.relabel "logs_integrations_integrations_node_exporter_journal_scrape" {

targets = []

rule {

source_labels = ["__journal__systemd_unit"]

target_label = "unit"

}

rule {

source_labels = ["__journal__boot_id"]

target_label = "boot_id"

}

rule {

source_labels = ["__journal__transport"]

target_label = "transport"

}

rule {

source_labels = ["__journal_priority_keyword"]

target_label = "level"

}

}

loki.source.file "logs_integrations_integrations_node_exporter_direct_scrape" {

targets = local.file_match.logs_integrations_integrations_node_exporter_direct_scrape.targets

forward_to = [loki.write.grafana_cloud_loki.receiver]

}

This integration uses the loki.source.journal and local.file_match components to collect system logs.

This includes the systemd journal and the file(s) matching /var/log/{syslog,messages,*.log}.

If you wish to capture other log files, you must add new maps to the path_targets list parameter of the local.file_match component. If you want these additionally captured logs to be labeled so that they appear in the Linux node logs dashboard, each entry must include the same instance and job labels.
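For instance, the following sketch extends the path_targets list with a second map. The path /var/log/nginx/*.log is a hypothetical example, not something the integration requires. The instance and job labels are copied from the first entry so that the extra logs can be shown on the logs dashboard.

```alloy
local.file_match "logs_integrations_integrations_node_exporter_direct_scrape" {
  // The first map is the one supplied by the integration. The second map is
  // a hypothetical addition: its path is only an example and should be
  // replaced with the log files you actually want to capture.
  path_targets = [
    {
      __address__ = "localhost",
      __path__    = "/var/log/{syslog,messages,*.log}",
      instance    = constants.hostname,
      job         = "integrations/node_exporter",
    },
    {
      __address__ = "localhost",
      __path__    = "/var/log/nginx/*.log",
      instance    = constants.hostname,
      job         = "integrations/node_exporter",
    },
  ]
}
```

Because the loki.source.file component already reads its targets from this local.file_match component, no other component needs to change for the new files to be tailed.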

Grafana Agent static configuration (deprecated)

The following section shows configuration for running Grafana Agent in static mode, which is deprecated. You should use Grafana Alloy for all new deployments.

Before you begin with Grafana Agent static

Each Linux node being observed must have its dedicated Grafana Agent running.

If you want to monitor more than one Linux node with this integration, we recommend using the Ansible collection for Grafana Cloud to deploy Grafana Agent to multiple machines, as described in this documentation.

Install Linux Server integration

- In your Grafana Cloud stack, click Connections in the left-hand menu.

- Find Linux Server and click its tile to open the integration.

- Review the prerequisites in the Configuration Details tab and set up Grafana Agent to send Linux Server metrics and logs to your Grafana Cloud instance.

- Click Install to add this integration’s pre-built dashboards and alerts to your Grafana Cloud instance, and you can start monitoring your Linux Server setup.

Post-install configuration for the Linux Server integration

This integration is configured to work with the node_exporter, which is embedded in the Grafana Agent.

Enable the integration by manually adding the provided snippets to your agent configuration file.

Note: The instance label must uniquely identify the node being scraped. Also, ensure each deployed Grafana Agent has a configuration that matches the node it is deployed to.

This integration supports metrics and logs from Linux. If you want to monitor your Linux node logs, there are 3 options. You can:

- scrape the journal

- scrape your OS log files directly

- scrape both your journal and OS log files

We recommend that you enable journal scraping because it comes with a unit label that can be used to filter logs on the dashboards. Config snippets for both cases are provided.

If you want to show logs and metrics signals correlated in your dashboards, as a single pane of glass, ensure the following:

- The job and instance label values must match for the node_exporter integration and the logs scrape config in your agent configuration file.

- The job label must be set to integrations/node_exporter (already configured in the snippets).

- The instance label must be set to a value that uniquely identifies your Linux node. Replace the default <your-instance-name> value manually according to your environment. Note that if you use localhost for multiple nodes, the dashboards will not be able to filter correctly by instance.

For a full description of configuration options see how to configure the node_exporter_config block in the agent documentation.

Configuration snippets for Grafana Agent

Below integrations, insert the following lines and change the URLs according to your environment:

  node_exporter:
    enabled: true
    # disable unused collectors
    disable_collectors:
      - ipvs #high cardinality on kubelet
      - btrfs
      - infiniband
      - xfs
      - zfs
    # exclude dynamic interfaces
    netclass_ignored_devices: "^(veth.*|cali.*|[a-f0-9]{15})$"
    netdev_device_exclude: "^(veth.*|cali.*|[a-f0-9]{15})$"
    # disable tmpfs
    filesystem_fs_types_exclude: "^(autofs|binfmt_misc|bpf|cgroup2?|configfs|debugfs|devpts|devtmpfs|tmpfs|fusectl|hugetlbfs|iso9660|mqueue|nsfs|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|selinuxfs|squashfs|sysfs|tracefs)$"
    # drop extensive scrape statistics
    metric_relabel_configs:
    - action: drop
      regex: node_scrape_collector_.+
      source_labels: [__name__]
    relabel_configs:
    - replacement: '<your-instance-name>'
      target_label: instance

Below logs.configs.scrape_configs, insert the following lines according to your environment.

    - job_name: integrations/node_exporter_journal_scrape
      journal:
        max_age: 24h
        labels:
          instance: '<your-instance-name>'
          job: integrations/node_exporter
      relabel_configs:
      - source_labels: ['__journal__systemd_unit']
        target_label: 'unit'
      - source_labels: ['__journal__boot_id']
        target_label: 'boot_id'
      - source_labels: ['__journal__transport']
        target_label: 'transport'
      - source_labels: ['__journal_priority_keyword']
        target_label: 'level'
    - job_name: integrations/node_exporter_direct_scrape
      static_configs:
      - targets:
        - localhost
        labels:
          instance: '<your-instance-name>'
          __path__: /var/log/{syslog,messages,*.log}
          job: integrations/node_exporter

Full example configuration for Grafana Agent

Refer to the following Grafana Agent configuration for a complete example that contains all the snippets used for the Linux Server integration. This example also includes metrics that are sent to monitor your Grafana Agent instance.

integrations:
  prometheus_remote_write:
  - basic_auth:
      password: <your_prom_pass>
      username: <your_prom_user>
    url: <your_prom_url>
  agent:
    enabled: true
    relabel_configs:
    - action: replace
      source_labels:
      - agent_hostname
      target_label: instance
    - action: replace
      target_label: job
      replacement: "integrations/agent-check"
    metric_relabel_configs:
    - action: keep
      regex: (prometheus_target_sync_length_seconds_sum|prometheus_target_scrapes_.*|prometheus_target_interval.*|prometheus_sd_discovered_targets|agent_build.*|agent_wal_samples_appended_total|process_start_time_seconds)
      source_labels:
      - __name__
  # Add here any snippet that belongs to the `integrations` section.
  # For a correct indentation, paste snippets copied from Grafana Cloud at the beginning of the line.
  node_exporter:
    enabled: true
    # disable unused collectors
    disable_collectors:
      - ipvs #high cardinality on kubelet
      - btrfs
      - infiniband
      - xfs
      - zfs
    # exclude dynamic interfaces
    netclass_ignored_devices: "^(veth.*|cali.*|[a-f0-9]{15})$"
    netdev_device_exclude: "^(veth.*|cali.*|[a-f0-9]{15})$"
    # disable tmpfs
    filesystem_fs_types_exclude: "^(autofs|binfmt_misc|bpf|cgroup2?|configfs|debugfs|devpts|devtmpfs|tmpfs|fusectl|hugetlbfs|iso9660|mqueue|nsfs|overlay|proc|procfs|pstore|rpc_pipefs|securityfs|selinuxfs|squashfs|sysfs|tracefs)$"
    # drop extensive scrape statistics
    metric_relabel_configs:
    - action: drop
      regex: node_scrape_collector_.+
      source_labels: [__name__]
    relabel_configs:
    - replacement: '<your-instance-name>'
      target_label: instance
logs:
  configs:
  - clients:
    - basic_auth:
        password: <your_loki_pass>
        username: <your_loki_user>
      url: <your_loki_url>
    name: integrations
    positions:
      filename: /tmp/positions.yaml
    scrape_configs:
      # Add here any snippet that belongs to the `logs.configs.scrape_configs` section.
      # For a correct indentation, paste snippets copied from Grafana Cloud at the beginning of the line.
    - job_name: integrations/node_exporter_journal_scrape
      journal:
        max_age: 24h
        labels:
          instance: '<your-instance-name>'
          job: integrations/node_exporter
      relabel_configs:
      - source_labels: ['__journal__systemd_unit']
        target_label: 'unit'
      - source_labels: ['__journal__boot_id']
        target_label: 'boot_id'
      - source_labels: ['__journal__transport']
        target_label: 'transport'
      - source_labels: ['__journal_priority_keyword']
        target_label: 'level'
    - job_name: integrations/node_exporter_direct_scrape
      static_configs:
      - targets:
        - localhost
        labels:
          instance: '<your-instance-name>'
          __path__: /var/log/{syslog,messages,*.log}
          job: integrations/node_exporter
metrics:
  configs:
  - name: integrations
    remote_write:
    - basic_auth:
        password: <your_prom_pass>
        username: <your_prom_user>
      url: <your_prom_url>
    scrape_configs:
      # Add here any snippet that belongs to the `metrics.configs.scrape_configs` section.
      # For a correct indentation, paste snippets copied from Grafana Cloud at the beginning of the line.
  global:
    scrape_interval: 60s
  wal_directory: /tmp/grafana-agent-wal

Dashboards

The Linux Server integration installs the following dashboards in your Grafana Cloud instance to help monitor your system.

- Linux node / CPU and system

- Linux node / filesystem and disks

- Linux node / fleet overview

- Linux node / logs

- Linux node / memory

- Linux node / network

- Linux node / overview

Node overview dashboard

Fleet overview dashboard

Drill down dashboards: Network interfaces

Alerts

The Linux Server integration includes the following useful alerts:

node-exporter-filesystem

| Alert | Description |
|---|---|
| NodeFilesystemAlmostOutOfSpace | Warning: Filesystem has less than 5% space left. |
| NodeFilesystemAlmostOutOfSpace | Critical: Filesystem has less than 3% space left. |
| NodeFilesystemFilesFillingUp | Warning: Filesystem is predicted to run out of inodes within the next 24 hours. |
| NodeFilesystemFilesFillingUp | Critical: Filesystem is predicted to run out of inodes within the next 4 hours. |
| NodeFilesystemAlmostOutOfFiles | Warning: Filesystem has less than 5% inodes left. |
| NodeFilesystemAlmostOutOfFiles | Critical: Filesystem has less than 3% inodes left. |

node-exporter

| Alert | Description |
|---|---|
| NodeCPUHighUsage | Info: High CPU usage. |
| NodeClockNotSynchronising | Warning: Clock not synchronising. |
| NodeClockSkewDetected | Warning: Clock skew detected. |
| NodeDiskIOSaturation | Warning: Disk IO queue is high. |
| NodeFileDescriptorLimit | Warning: Kernel is predicted to exhaust file descriptors limit soon. |
| NodeHasRebooted | Info: Node has rebooted. |
| NodeHighNumberConntrackEntriesUsed | Warning: Number of conntrack entries is getting close to the limit. |
| NodeMemoryHighUtilization | Warning: Host is running out of memory. |
| NodeMemoryMajorPagesFaults | Warning: Memory major page faults are occurring at very high rate. |
| NodeNetworkReceiveErrs | Warning: Network interface is reporting many receive errors. |
| NodeNetworkTransmitErrs | Warning: Network interface is reporting many transmit errors. |
| NodeProcessesCountIsHigh | Warning: There are more than 400 running processes on host. |
| NodeRAIDDegraded | Critical: RAID Array is degraded. |
| NodeRAIDDiskFailure | Warning: Failed device in RAID array. |
| NodeSystemSaturation | Warning: System saturated, load per core is very high. |
| NodeSystemdServiceCrashlooping | Warning: Systemd service keeps restarting, possibly crash looping. |
| NodeSystemdServiceFailed | Warning: Systemd service has entered failed state. |
| NodeTextFileCollectorScrapeError | Warning: Node Exporter text file collector failed to scrape. |

Metrics

The most important metrics provided by the Linux Server integration, which are used on the pre-built dashboards and Prometheus alerts, are as follows:

- node_arp_entries

- node_boot_time_seconds

- node_context_switches_total

- node_cpu_seconds_total

- node_disk_io_time_seconds_total

- node_disk_io_time_weighted_seconds_total

- node_disk_read_bytes_total

- node_disk_read_time_seconds_total

- node_disk_reads_completed_total

- node_disk_write_time_seconds_total

- node_disk_writes_completed_total

- node_disk_written_bytes_total

- node_filefd_allocated

- node_filefd_maximum

- node_filesystem_avail_bytes

- node_filesystem_device_error

- node_filesystem_files

- node_filesystem_files_free

- node_filesystem_readonly

- node_filesystem_size_bytes

- node_intr_total

- node_load1

- node_load15

- node_load5

- node_md_disks

- node_md_disks_required

- node_memory_Active_anon_bytes

- node_memory_Active_bytes

- node_memory_Active_file_bytes

- node_memory_AnonHugePages_bytes

- node_memory_AnonPages_bytes

- node_memory_Bounce_bytes

- node_memory_Buffers_bytes

- node_memory_Cached_bytes

- node_memory_CommitLimit_bytes

- node_memory_Committed_AS_bytes

- node_memory_DirectMap1G_bytes

- node_memory_DirectMap2M_bytes

- node_memory_DirectMap4k_bytes

- node_memory_Dirty_bytes

- node_memory_HugePages_Free

- node_memory_HugePages_Rsvd

- node_memory_HugePages_Surp

- node_memory_HugePages_Total

- node_memory_Hugepagesize_bytes

- node_memory_Inactive_anon_bytes

- node_memory_Inactive_bytes

- node_memory_Inactive_file_bytes

- node_memory_Mapped_bytes

- node_memory_MemAvailable_bytes

- node_memory_MemFree_bytes

- node_memory_MemTotal_bytes

- node_memory_SReclaimable_bytes

- node_memory_SUnreclaim_bytes

- node_memory_ShmemHugePages_bytes

- node_memory_ShmemPmdMapped_bytes

- node_memory_Shmem_bytes

- node_memory_Slab_bytes

- node_memory_SwapTotal_bytes

- node_memory_VmallocChunk_bytes

- node_memory_VmallocTotal_bytes

- node_memory_VmallocUsed_bytes

- node_memory_WritebackTmp_bytes

- node_memory_Writeback_bytes

- node_netstat_Icmp6_InErrors

- node_netstat_Icmp6_InMsgs

- node_netstat_Icmp6_OutMsgs

- node_netstat_Icmp_InErrors

- node_netstat_Icmp_InMsgs

- node_netstat_Icmp_OutMsgs

- node_netstat_IpExt_InOctets

- node_netstat_IpExt_OutOctets

- node_netstat_TcpExt_ListenDrops

- node_netstat_TcpExt_ListenOverflows

- node_netstat_TcpExt_TCPSynRetrans

- node_netstat_Tcp_InErrs

- node_netstat_Tcp_InSegs

- node_netstat_Tcp_OutRsts

- node_netstat_Tcp_OutSegs

- node_netstat_Tcp_RetransSegs

- node_netstat_Udp6_InDatagrams

- node_netstat_Udp6_InErrors

- node_netstat_Udp6_NoPorts

- node_netstat_Udp6_OutDatagrams

- node_netstat_Udp6_RcvbufErrors

- node_netstat_Udp6_SndbufErrors

- node_netstat_UdpLite_InErrors

- node_netstat_Udp_InDatagrams

- node_netstat_Udp_InErrors

- node_netstat_Udp_NoPorts

- node_netstat_Udp_OutDatagrams

- node_netstat_Udp_RcvbufErrors

- node_netstat_Udp_SndbufErrors

- node_network_carrier

- node_network_info

- node_network_mtu_bytes

- node_network_receive_bytes_total

- node_network_receive_compressed_total

- node_network_receive_drop_total

- node_network_receive_errs_total

- node_network_receive_fifo_total

- node_network_receive_multicast_total

- node_network_receive_packets_total

- node_network_speed_bytes

- node_network_transmit_bytes_total

- node_network_transmit_compressed_total

- node_network_transmit_drop_total

- node_network_transmit_errs_total

- node_network_transmit_fifo_total

- node_network_transmit_multicast_total

- node_network_transmit_packets_total

- node_network_transmit_queue_length

- node_network_up

- node_nf_conntrack_entries

- node_nf_conntrack_entries_limit

- node_os_info

- node_procs_running

- node_sockstat_FRAG6_inuse

- node_sockstat_FRAG_inuse

- node_sockstat_RAW6_inuse

- node_sockstat_RAW_inuse

- node_sockstat_TCP6_inuse

- node_sockstat_TCP_alloc

- node_sockstat_TCP_inuse

- node_sockstat_TCP_mem

- node_sockstat_TCP_mem_bytes

- node_sockstat_TCP_orphan

- node_sockstat_TCP_tw

- node_sockstat_UDP6_inuse

- node_sockstat_UDPLITE6_inuse

- node_sockstat_UDPLITE_inuse

- node_sockstat_UDP_inuse

- node_sockstat_UDP_mem

- node_sockstat_UDP_mem_bytes

- node_sockstat_sockets_used

- node_softnet_dropped_total

- node_softnet_processed_total

- node_softnet_times_squeezed_total

- node_systemd_service_restart_total

- node_systemd_unit_state

- node_textfile_scrape_error

- node_time_zone_offset_seconds

- node_timex_estimated_error_seconds

- node_timex_maxerror_seconds

- node_timex_offset_seconds

- node_timex_sync_status

- node_uname_info

- node_vmstat_oom_kill

- node_vmstat_pgfault

- node_vmstat_pgmajfault

- node_vmstat_pgpgin

- node_vmstat_pgpgout

- node_vmstat_pswpin

- node_vmstat_pswpout

- process_max_fds

- process_open_fds

- up

Changelog

# 1.5.2 - January 2025

* Fix status panel metrics data source

# 1.5.1 - January 2025

* Fix status panel logs query

# 1.5.0 - January 2025

* Update node mixin, add new alerts:

  * NodeHasRebooted

  * NodeProcessesCountIsHigh

* Fix logs dashboard not showing any logs if cluster label is missing.

# 1.4.2 - November 2024

* Update Log dashboard job selector to always have a selected option.

# 1.4.1 - November 2024

* Update status panel check queries.

# 1.4.0 - September 2024

* Add asserts support.

# 1.3.0 - June 2024

* Add new alert: NodeSystemdServiceCrashlooping

* Fix links in the fleet overview table.

# 1.2.3 - December 2023

* Accept `integrations/unix` for compatibility with default flow mode node_exporter job name.

# 1.2.2 - December 2023

* Fix issues with showing data on dashboards when `cluster` label has no value.

# 1.2.1 - December 2023

* Fix queries for memoryBuffers memoryCached metrics

* Update network traffic panels to show only interfaces that had traffic

* Update network errors/drops panels to show only values greater than 0.

# 1.2.0 - November 2023

* Dashboards prefixes are changed to 'Linux node/ '

* Add new Loki based annotations:

  * Service failed

  * Critical system event

  * Session (ssh,console) opened/closed

* Apply panel changes, some examples:

  * Use Sentence case in titles

  * Memory TS panel: Show only 'Memory total' and 'Memory used' by default

  * CPU usage TS panel: Use Blue-Yellow-Red color Schema

* Add OS and group labels(job, cluster) as columns in Fleet overview table

* NodeSystemSaturation alert severity is set to warning

* Attach integration status panel to fleet and logs dashboards.

# 1.1.2 - August 2023

* Add regex filter for logs datasource.

# 1.1.1 - July 2023

* New Filter Metrics option for configuring the Grafana Agent, which saves on metrics cost by dropping any metric not used by this integration. Beware that anything custom built using metrics that are not on the snippet will stop working.

# 1.1.0 - June 2023

* This update introduces generic logs dashboard 'Node Exporter / Node Logs'

* Drop log panels 'Node Overview' dashboard.

# 1.0.1 - June 2023

* This update includes the following, by updating to the latest mixin:

  * Panel description typos have been fixed

  * Incorrect data links in the "Node Fleet Overview" Dashboard now correctly include the dashboard selector.

# 1.0.0 - April 2023

* This update introduces 3-tier view of linux nodes:

  * TOP: Fleet view: see group of your linux instances at once

  * Overview of the specific node: see specific node at a glance

  * Drill down: Set of dashboards for deep analysis using advanced metrics (Memory, CPU and System, Filesystem and Disk, Networking)

* Links and data links are provided for better navigation between views

* Update agent's filter config in docs, to reduce number of timeseries generated per node

* Metrics filter instructions to exclude dynamic network devices, temp filesystems and extended scrape statistics

* Remove USE dashboards

* Convert all graphs to timeseries panels

* Add information row

* New alerts

* Split alerts into two alert groups

* Annotations for events: Reboot, OOMkill, and 'Kernel update'.

# 0.0.8 - October 2022

* Update upstream node_exporter mixin

* Enable multicluster dashboards for use in kubernetes.

* Add direct log file scrape to the agent snippets.

# 0.0.7 - September 2022

* Remove source_address from relabel_configs.

# 0.0.6 - May 2022

* Reverse fsSpaceAvailableCriticalThreshold and fsSpaceAvailableWarningThreshold

* Update units for disk and networking panels.

# 0.0.5 - May 2022

* Update 'Disk Space Usage' panel to table format.

# 0.0.4 - April 2022

* Fixed alerts and recording rules by providing proper nodeSelector.

# 0.0.3 - February 2022

* Added logs support from Loki datasource.

# 0.0.2 - October 2021

* Update all rate queries to use `$__rate_interval`.

# 0.0.1 - June 2020

* Initial release.

Cost

By connecting your Linux Server instance to Grafana Cloud, you might incur charges. To view information on the number of active series that your Grafana Cloud account uses for metrics included in each Cloud tier, see Active series and dpm usage and Cloud tier pricing.