Snowflake integration for Grafana Cloud

Snowflake is a cloud data platform that is designed to connect businesses globally, across any type or scale of data and many different workloads, and unlock seamless data collaboration. The Snowflake integration uses the Grafana Agent to collect metrics for monitoring a Snowflake account, including aspects such as credit usage, storage usage, and login success rates. Accompanying dashboards are provided to visualize these metrics.

This integration supports metrics provided by v0.0.1 of the Snowflake exporter, which is integrated into the Grafana Agent.

This integration includes 6 useful alerts and 2 pre-built dashboards to help monitor and visualize Snowflake metrics.

Before you begin

In order to scrape Snowflake metrics, you must use a user configured with the ACCOUNTADMIN role, or a custom role that has access to the SNOWFLAKE.ACCOUNT_USAGE schema.

See the Snowflake documentation for instructions on how to enable other roles to access the SNOWFLAKE.ACCOUNT_USAGE schema.

Install Snowflake integration for Grafana Cloud

- In your Grafana Cloud stack, click Connections in the left-hand menu.

- Find Snowflake and click its tile to open the integration.

- Review the prerequisites in the Configuration Details tab and set up Grafana Agent to send Snowflake metrics to your Grafana Cloud instance.

- Click Install to add this integration’s pre-built dashboards and alerts to your Grafana Cloud instance, and you can start monitoring your Snowflake setup.

Configuration snippets for Grafana Alloy

Simple mode

These snippets are configured to scrape a single Snowflake instance running locally with default ports.

First, manually copy and append the following snippets to your Alloy configuration file.

Integrations snippets

prometheus.exporter.snowflake "integrations_snowflake" {
  account_name = "SNOWFLAKE_ACCOUNT"
  username = "SNOWFLAKE_USERNAME"
  password = "SNOWFLAKE_PASSWORD"
  warehouse = "SNOWFLAKE_WAREHOUSE"
}

discovery.relabel "integrations_snowflake" {
  targets = prometheus.exporter.snowflake.integrations_snowflake.targets

  rule {
    target_label = "instance"
    replacement = constants.hostname
  }

  rule {
    target_label = "job"
    replacement = "integrations/snowflake"
  }
}

prometheus.scrape "integrations_snowflake" {
  targets = discovery.relabel.integrations_snowflake.output
  forward_to = [prometheus.remote_write.metrics_service.receiver]
  job_name = "integrations/snowflake"
  scrape_interval = "30m0s"
  scrape_timeout = "1m0s"
}

Advanced mode

The following snippets provide examples to guide you through the configuration process.

To instruct Grafana Alloy to scrape your Snowflake instances, manually copy and append the snippets to your Alloy configuration file, then follow the subsequent instructions.

Advanced integrations snippets

prometheus.exporter.snowflake "integrations_snowflake" {
  account_name = "SNOWFLAKE_ACCOUNT"
  username = "SNOWFLAKE_USERNAME"
  password = "SNOWFLAKE_PASSWORD"
  warehouse = "SNOWFLAKE_WAREHOUSE"
}

discovery.relabel "integrations_snowflake" {
  targets = prometheus.exporter.snowflake.integrations_snowflake.targets

  rule {
    target_label = "instance"
    replacement = constants.hostname
  }

  rule {
    target_label = "job"
    replacement = "integrations/snowflake"
  }
}

prometheus.scrape "integrations_snowflake" {
  targets = discovery.relabel.integrations_snowflake.output
  forward_to = [prometheus.remote_write.metrics_service.receiver]
  job_name = "integrations/snowflake"
  scrape_interval = "30m0s"
  scrape_timeout = "1m0s"
}

This integration uses the prometheus.exporter.snowflake component to generate metrics from a Snowflake instance.

You must provide account details and credentials to scrape Snowflake: the account_name (in the form [organization]-[account], for example aaaaaaa-bb12345), username, warehouse, some form of authentication, and a role if the user is not configured with the ACCOUNTADMIN role. For password authentication, include a password. For RSA key-pair authentication, a private_key_path is required, and a private_key_password is also required if the key is encrypted.
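As a rough sketch of the key-pair option described above, the exporter block could look something like the following. The key file path, the passphrase placeholder, and the role name GRAFANA_MONITORING are illustrative assumptions rather than values from this integration, so check the attribute names against the prometheus.exporter.snowflake reference before relying on it.

```alloy
// Sketch only: RSA key-pair authentication instead of a password.
prometheus.exporter.snowflake "integrations_snowflake" {
  account_name = "SNOWFLAKE_ACCOUNT"
  username = "SNOWFLAKE_USERNAME"
  warehouse = "SNOWFLAKE_WAREHOUSE"

  // Hypothetical custom role with access to SNOWFLAKE.ACCOUNT_USAGE.
  // Needed only when the user is not configured with ACCOUNTADMIN.
  role = "GRAFANA_MONITORING"

  // Hypothetical location of the RSA private key on the Alloy host.
  private_key_path = "/etc/alloy/snowflake_rsa_key.p8"

  // Required only for encrypted keys; omit it for an unencrypted key.
  private_key_password = "SNOWFLAKE_PRIVATE_KEY_PASSWORD"
}
```

The discovery.relabel and prometheus.scrape components from the snippets above should not need to change when switching authentication methods, since they reference only the exporter's targets.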

The optional flag exclude_deleted_tables = true will exclude tables that have been deleted when querying Snowflake, which can dramatically improve processing time for larger environments. As a result, panels tracking table failsafe and time travel data may under-report, as they will only show the amount of data deleted from active tables.
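A minimal sketch of enabling this flag, assuming password authentication and the same placeholder values used in the snippets above:

```alloy
// Sketch only: same exporter block as above, with deleted tables excluded.
prometheus.exporter.snowflake "integrations_snowflake" {
  account_name = "SNOWFLAKE_ACCOUNT"
  username = "SNOWFLAKE_USERNAME"
  password = "SNOWFLAKE_PASSWORD"
  warehouse = "SNOWFLAKE_WAREHOUSE"

  // Skip deleted tables when querying Snowflake. Failsafe and time travel
  // panels may under-report as a result.
  exclude_deleted_tables = true
}
```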

For the full array of configuration options, refer to the prometheus.exporter.snowflake component reference documentation.

This exporter must be linked with a discovery.relabel component to apply the necessary relabelings.

For each Snowflake instance to be monitored you must create a pair of these components.

Configure the following properties within each discovery.relabel component:

- instance label: constants.hostname sets the instance label to your Grafana Alloy server hostname. If that is not suitable, change it to a value that uniquely identifies this Snowflake instance.

You can then scrape them by including each discovery.relabel under targets within the prometheus.scrape component.
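To illustrate the pairing described above, the following sketch monitors two Snowflake accounts. The component labels snowflake_prod and snowflake_dev, the instance values, and the SNOWFLAKE_PROD_* and SNOWFLAKE_DEV_* placeholders are hypothetical. It also assumes a recent Alloy release that provides array.concat in the standard library; older releases may expose this function as concat instead.

```alloy
// First exporter and relabel pair (hypothetical "prod" account).
prometheus.exporter.snowflake "snowflake_prod" {
  account_name = "SNOWFLAKE_PROD_ACCOUNT"
  username = "SNOWFLAKE_PROD_USERNAME"
  password = "SNOWFLAKE_PROD_PASSWORD"
  warehouse = "SNOWFLAKE_PROD_WAREHOUSE"
}

discovery.relabel "snowflake_prod" {
  targets = prometheus.exporter.snowflake.snowflake_prod.targets

  rule {
    target_label = "instance"
    // A value that uniquely identifies this Snowflake instance.
    replacement = "snowflake-prod"
  }

  rule {
    target_label = "job"
    replacement = "integrations/snowflake"
  }
}

// Second exporter and relabel pair (hypothetical "dev" account).
prometheus.exporter.snowflake "snowflake_dev" {
  account_name = "SNOWFLAKE_DEV_ACCOUNT"
  username = "SNOWFLAKE_DEV_USERNAME"
  password = "SNOWFLAKE_DEV_PASSWORD"
  warehouse = "SNOWFLAKE_DEV_WAREHOUSE"
}

discovery.relabel "snowflake_dev" {
  targets = prometheus.exporter.snowflake.snowflake_dev.targets

  rule {
    target_label = "instance"
    replacement = "snowflake-dev"
  }

  rule {
    target_label = "job"
    replacement = "integrations/snowflake"
  }
}

// One scrape component that collects from both relabel outputs.
prometheus.scrape "integrations_snowflake" {
  targets = array.concat(discovery.relabel.snowflake_prod.output, discovery.relabel.snowflake_dev.output)
  forward_to = [prometheus.remote_write.metrics_service.receiver]
  job_name = "integrations/snowflake"
  scrape_interval = "30m0s"
  scrape_timeout = "1m0s"
}
```

Giving each pair a distinct instance value instead of constants.hostname keeps the two accounts distinguishable when both are scraped from the same Alloy host.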

By default, the scrape_interval is set to 30 minutes due to Snowflake’s large metric bucket time frames, but this interval may be reduced if desired.

Grafana Agent static configuration (deprecated)

The following section shows configuration for running Grafana Agent in static mode which is deprecated. You should use Grafana Alloy for all new deployments.

Post-install configuration for the Snowflake integration

This integration is configured to work with the snowflake-prometheus-exporter, which is embedded in Grafana Agent.

Enable the integration by adding the provided snippet to your agent configuration file.

You must provide account details and credentials to scrape Snowflake: the account_name (in the form [organization]-[account], for example aaaaaaa-bb12345), username, warehouse, some form of authentication, and a role if the user is not configured with the ACCOUNTADMIN role. For password authentication, include a password. For RSA key-pair authentication, a private_key_path is required, and a private_key_password is also required if the key is encrypted.

By default, the scrape_interval is set to 30 minutes due to Snowflake’s large metric bucket time frames, but this interval may be reduced if desired.

For a full description of the configuration options, see how to configure the snowflake block in the Grafana Agent documentation.

Configuration snippets for Grafana Agent

Under integrations, insert the following lines and replace the placeholder values according to your environment:

  snowflake:
    enabled: true
    scrape_interval: 30m
    scrape_timeout: 1m
    scrape_integration: true
    account_name: "SNOWFLAKE_ACCOUNT"
    username: "SNOWFLAKE_USERNAME"
    password: "SNOWFLAKE_PASSWORD"
    warehouse: "SNOWFLAKE_WAREHOUSE"
    role: "ACCOUNTADMIN"
    relabel_configs:
      - replacement: '<your-instance-name>'
        target_label: instance

Full example configuration for Grafana Agent

Refer to the following Grafana Agent configuration for a complete example that contains all the snippets used for the Snowflake integration. This example also includes metrics that are sent to monitor your Grafana Agent instance.

integrations:
  prometheus_remote_write:
  - basic_auth:
      password: <your_prom_pass>
      username: <your_prom_user>
    url: <your_prom_url>
  agent:
    enabled: true
    relabel_configs:
    - action: replace
      source_labels:
      - agent_hostname
      target_label: instance
    - action: replace
      target_label: job
      replacement: "integrations/agent-check"
    metric_relabel_configs:
    - action: keep
      regex: (prometheus_target_sync_length_seconds_sum|prometheus_target_scrapes_.*|prometheus_target_interval.*|prometheus_sd_discovered_targets|agent_build.*|agent_wal_samples_appended_total|process_start_time_seconds)
      source_labels:
      - __name__
  # Add here any snippet that belongs to the `integrations` section.
  # For a correct indentation, paste snippets copied from Grafana Cloud at the beginning of the line.
  snowflake:
    enabled: true
    scrape_interval: 30m
    scrape_timeout: 1m
    scrape_integration: true
    account_name: "SNOWFLAKE_ACCOUNT"
    username: "SNOWFLAKE_USERNAME"
    password: "SNOWFLAKE_PASSWORD"
    warehouse: "SNOWFLAKE_WAREHOUSE"
    role: "ACCOUNTADMIN"
    relabel_configs:
      - replacement: '<your-instance-name>'
        target_label: instance
logs:
  configs:
  - clients:
    - basic_auth:
        password: <your_loki_pass>
        username: <your_loki_user>
      url: <your_loki_url>
    name: integrations
    positions:
      filename: /tmp/positions.yaml
    scrape_configs:
      # Add here any snippet that belongs to the `logs.configs.scrape_configs` section.
      # For a correct indentation, paste snippets copied from Grafana Cloud at the beginning of the line.
metrics:
  configs:
  - name: integrations
    remote_write:
    - basic_auth:
        password: <your_prom_pass>
        username: <your_prom_user>
      url: <your_prom_url>
    scrape_configs:
      # Add here any snippet that belongs to the `metrics.configs.scrape_configs` section.
      # For a correct indentation, paste snippets copied from Grafana Cloud at the beginning of the line.
  global:
    scrape_interval: 60s
  wal_directory: /tmp/grafana-agent-wal

Dashboards

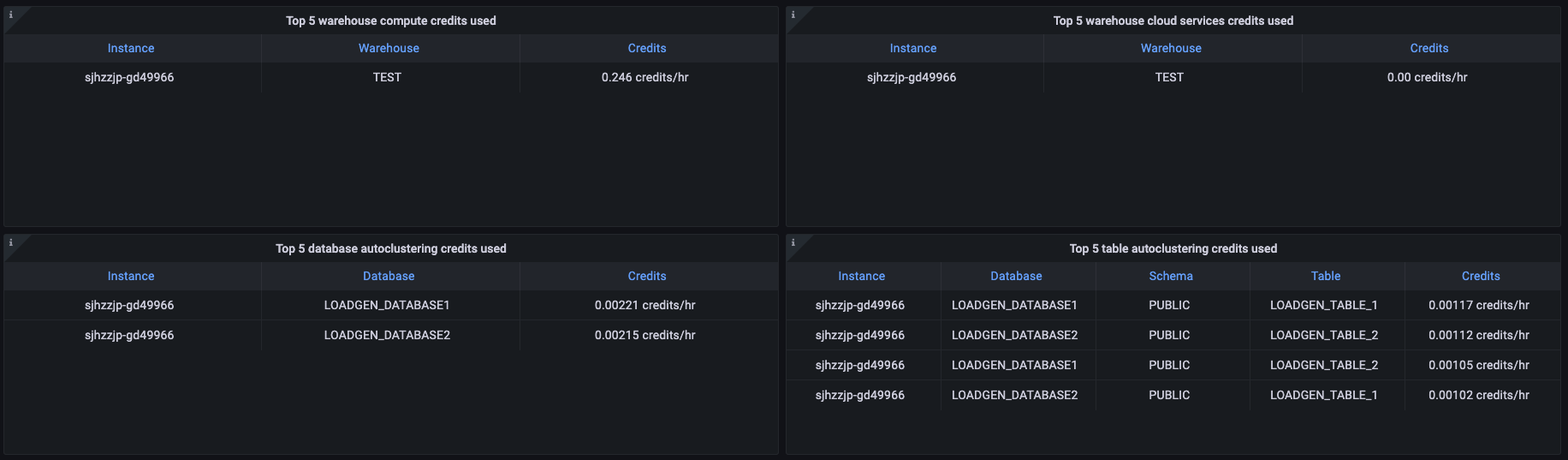

The Snowflake integration installs the following dashboards in your Grafana Cloud instance to help monitor your system.

- Snowflake data ownership

- Snowflake overview

Snowflake overview dashboard (1/3).

Snowflake overview dashboard (2/3).

Snowflake overview dashboard (3/3).

Alerts

The Snowflake integration includes the following useful alerts:

| Alert | Description |

|---|---|

| SnowflakeWarnHighLoginFailures | Warning: Large login failure rate. |

| SnowflakeWarnHighComputeCreditUsage | Warning: Compute credit usage is within 20% of the configured limit. |

| SnowflakeCriticalHighComputeCreditUsage | Critical: Compute credit usage is over the configured limit. |

| SnowflakeWarnHighServiceCreditUsage | Warning: Cloud services credit usage is within 20% of the configured limit. |

| SnowflakeCriticalHighServiceCreditUsage | Critical: Cloud services credit usage is over the configured limit. |

| SnowflakeDown | Warning: Snowflake exporter failed to scrape. |

Metrics

The most important metrics provided by the Snowflake integration, which are used on the pre-built dashboards and Prometheus alerts, are as follows:

- snowflake_auto_clustering_credits

- snowflake_failed_login_rate

- snowflake_failsafe_bytes

- snowflake_login_rate

- snowflake_stage_bytes

- snowflake_storage_bytes

- snowflake_successful_login_rate

- snowflake_table_active_bytes

- snowflake_table_clone_bytes

- snowflake_table_deleted_tables

- snowflake_table_failsafe_bytes

- snowflake_table_time_travel_bytes

- snowflake_up

- snowflake_used_cloud_services_credits

- snowflake_used_compute_credits

- snowflake_warehouse_blocked_queries

- snowflake_warehouse_executed_queries

- snowflake_warehouse_overloaded_queue_size

- snowflake_warehouse_provisioning_queue_size

- snowflake_warehouse_used_cloud_service_credits

- snowflake_warehouse_used_compute_credits

- up

Changelog

# 1.0.1 - January 2025

* Update integration instructions to provide detail on configuring optional RSA key-pair authentication in the Snowflake exporter

# 1.0.0 - October 2024

* Adds a panel to track deleted tables

* Updates documentation for new exporter option to exclude deleted tables

* Bump version to 1.0.0

# 0.0.2 - September 2023

- New Filter Metrics option for configuring the Grafana Agent, which saves on metrics cost by dropping any metric not used by this integration. Beware that anything custom built using metrics that are not on the snippet will stop working.

- New hostname relabel option, which applies the instance name you write on the text box to the Grafana Agent configuration snippets, making it easier and less error prone to configure this mandatory label.

# 0.0.1 - January 2023

- Initial release

Cost

By connecting your Snowflake instance to Grafana Cloud, you might incur charges. To view information on the number of active series that your Grafana Cloud account uses for metrics included in each Cloud tier, see Active series and dpm usage and Cloud tier pricing.