Loki tutorial: How to send logs from EKS with Promtail to get full visibility in Grafana

Amazon Elastic Kubernetes Service (Amazon EKS) is the fully managed Kubernetes service on AWS. If you’re using it and wondering how to query all your logs in one place, Loki is the answer. With this tutorial, you’ll learn how to set up Promtail on EKS to get full visibility into your cluster logs while using Grafana. We’ll start by forwarding pod logs, then node services, and finally Kubernetes events.

Interested in setting up the Promtail agent on AWS EC2 to find and analyze your logs? Check out my previous tutorial.

Want to learn even more about Loki? I hope you’ll join us today for our Logging with Loki: Essential configuration settings webinar. Sign up to attend live or to receive a link to watch the recording on demand another time.

Requirements

Before we start you’ll need:

- The AWS CLI configured (run aws configure).

- kubectl and eksctl installed.

- A Grafana instance with a Loki data source already configured.

For the sake of simplicity we’ll use a Grafana Cloud Loki and Grafana instance (get a free 30-day trial of Grafana Cloud Loki here), but all the steps are the same if you’re running your own Loki and Grafana instance.
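A quick preflight check can catch a missing tool before the cluster creation starts. The sketch below is an illustration rather than part of the original setup: missing_tools is a hypothetical helper that prints every command it cannot find on your PATH. Helm appears in the example call because the tutorial uses it further down.

```shell
# Hypothetical helper (not from the tutorial): print the name of every
# required tool that is not available on the PATH.
missing_tools() {
  for tool in "$@"; do
    command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
  done
}

# Example call for this tutorial:
#   missing_tools aws kubectl eksctl helm
```

If the example call prints nothing, every listed tool was found.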

Setting up the cluster

In this tutorial we’ll use eksctl, a simple command line utility for creating and managing Kubernetes clusters on Amazon EKS. AWS requires creating many resources such as IAM roles, security groups and networks, and by using eksctl, all of this is simplified.

We’re not going to use a Fargate cluster for this, because DaemonSets are not supported on Fargate. The only way to ship logs with EKS Fargate is to run fluentd, Fluent Bit, or Promtail as a sidecar, and tee your logs into a file. For information on how to do that, check out this blog post.

Let’s run our first command:

eksctl create cluster --name loki-promtail --managed

Feel free to go grab a coffee – this usually takes 15 minutes. When it’s finished, you should have kubectl context configured to communicate with your newly created cluster.

Let’s verify everything is fine:

kubectl version

Client Version: version.Info{Major:"1", Minor:"18", GitVersion:"v1.18.5", GitCommit:"e6503f8d8f769ace2f338794c914a96fc335df0f", GitTreeState:"clean", BuildDate:"2020-07-04T15:01:15Z", GoVersion:"go1.14.4", Compiler:"gc", Platform:"darwin/amd64"}

Server Version: version.Info{Major:"1", Minor:"16+", GitVersion:"v1.16.8-eks-fd1ea7", GitCommit:"fd1ea7c64d0e3ccbf04b124431c659f65330562a", GitTreeState:"clean", BuildDate:"2020-05-28T19:06:00Z", GoVersion:"go1.13.8", Compiler:"gc", Platform:"linux/amd64"}

Adding Promtail DaemonSet

To ship all your pod logs, we’re going to set up Promtail as a DaemonSet in our cluster. This means it will run on each node of the cluster. We’ll then configure it to find the logs of your containers on the host.

What’s nice about Promtail is that it uses the same service discovery as Prometheus. Make sure the scrape_configs of Promtail matches the Prometheus one. Not only is this simpler to configure, but it also means metrics and logs will have the same metadata (labels) attached by the Prometheus service discovery. Then, when querying Grafana, you’ll be able to correlate metrics and logs very quickly. (Read more about that here.)

We’re going to use Helm 3, but if you want to use Helm 2 it’s also fine. Just make sure you’ve properly installed Tiller.

Let’s add the Loki repository and list all available charts.

helm repo add loki https://grafana.github.io/loki/charts

"loki" has been added to your repositories

helm search repo

NAME             CHART VERSION  APP VERSION  DESCRIPTION
loki/fluent-bit  0.1.4          v1.5.0       Uses fluent-bit Loki go plugin for gathering lo...
loki/loki        0.30.1         v1.5.0       Loki: like Prometheus, but for logs.
loki/loki-stack  0.38.1         v1.5.0       Loki: like Prometheus, but for logs.
loki/promtail    0.23.2         v1.5.0       Responsible for gathering logs and sending them...

If you want to install Loki, Grafana, Prometheus and Promtail all together, you can use the loki-stack chart. For now, we’ll focus on Promtail. Let’s create a new helm value file. We’ll fetch the default one and work from there:

curl https://raw.githubusercontent.com/grafana/loki/master/production/helm/promtail/values.yaml > values.yaml

First we’re going to tell Promtail to send logs to our Loki instance. The example below shows how to send logs to Grafana Cloud. (Replace the placeholders with your own credentials.) The default value sends logs to your own Loki and Grafana instance if you’re using the loki-chart repository.

loki:
  serviceName: "logs-prod-us-central1.grafana.net"
  servicePort: 443
  serviceScheme: https
  user: <userid>
  password: <grafancloud apikey>

Once you’re ready let’s create a new namespace monitoring and add Promtail to it:

kubectl create namespace monitoring

namespace/monitoring created

helm install promtail --namespace monitoring loki/promtail -f values.yaml

NAME: promtail

LAST DEPLOYED: Fri Jul 10 14:41:37 2020

NAMESPACE: default

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

Verify the application is working by running these commands:

kubectl --namespace default port-forward daemonset/promtail 3101

curl http://127.0.0.1:3101/metrics

Verify that the Promtail pods are running. You should see only two since we’re running a two-node cluster.

kubectl get -n monitoring pods

NAME READY STATUS RESTARTS AGE

promtail-87t62 1/1 Running 0 35s

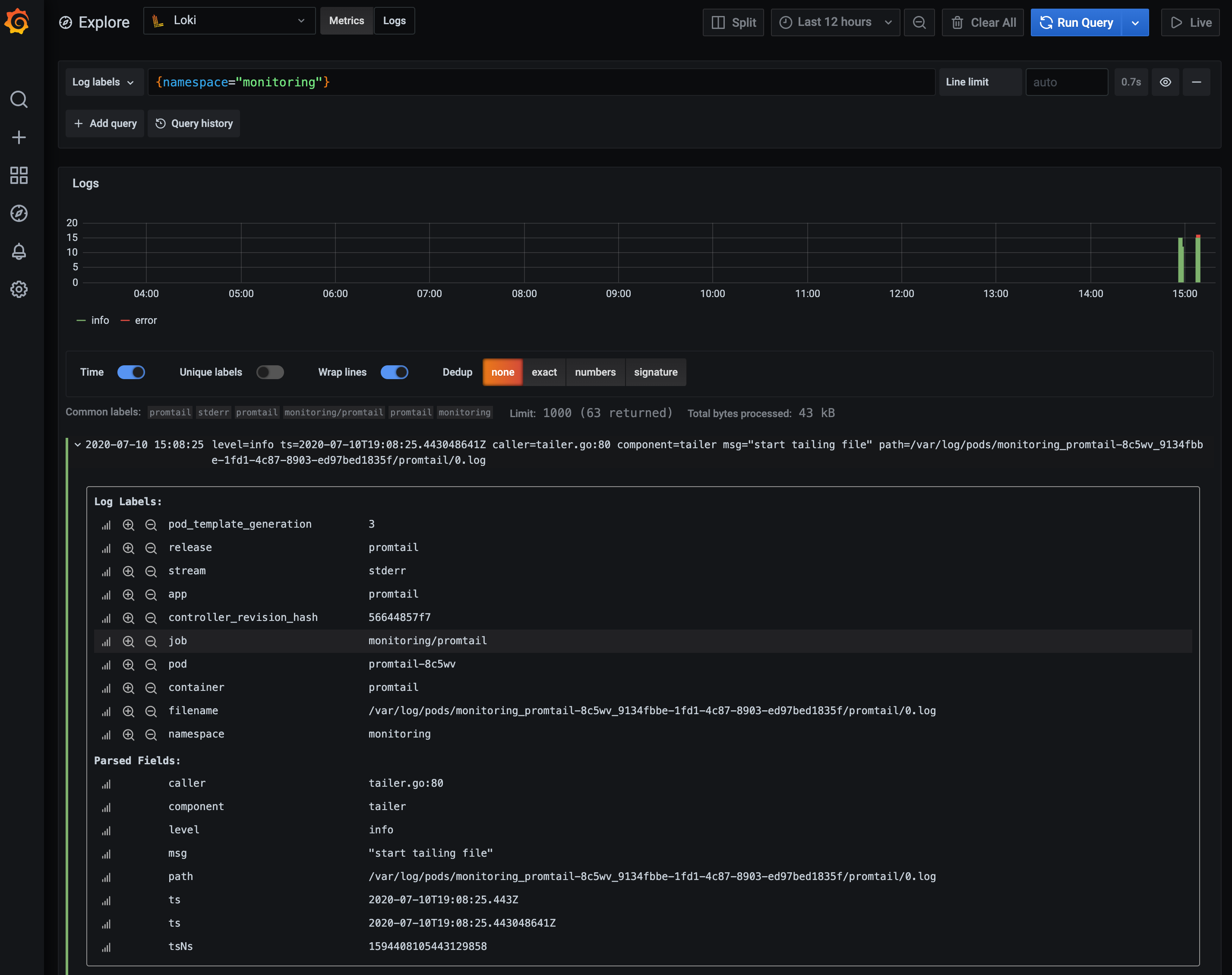

promtail-8c2r4 1/1 Running 0 35sYou can reach your Grafana instance and start exploring your logs. For example, if you want to see all logs in the monitoring namespace use {namespace="monitoring"}. You can also expand a single log line to discover all labels available from the Kubernetes service discovery.
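If you prefer the command line, the same query can be sent to Loki’s HTTP API. This is a minimal sketch, assuming basic authentication with the same user ID and API key used in values.yaml. The loki_query function and the LOKI_HOST, LOKI_USER and LOKI_API_KEY variables are hypothetical names chosen for this example.

```shell
# Hypothetical helper (not from the tutorial): run a LogQL query against
# Loki's query_range endpoint with curl.
# Assumes LOKI_HOST, LOKI_USER and LOKI_API_KEY are set in the environment.
loki_query() {
  query="$1"        # LogQL expression, e.g. '{namespace="monitoring"}'
  limit="${2:-10}"  # maximum number of log lines to return
  curl -s -G \
    -u "${LOKI_USER}:${LOKI_API_KEY}" \
    --data-urlencode "query=${query}" \
    --data-urlencode "limit=${limit}" \
    "https://${LOKI_HOST}/loki/api/v1/query_range"
}

# Example call:
#   loki_query '{namespace="monitoring"}' 5
```

The response is JSON, so piping it through a JSON formatter makes the log lines and their labels easier to read.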

Fetching kubelet logs with systemd

So far, we’ve been scraping logs from containers, but if you want to get more visibility, you could also scrape systemd logs from each of your machines. This means you can also get access to kubelet logs.

Let’s edit our values file again and use extraScrapeConfigs to add the systemd job:

extraScrapeConfigs:
  - job_name: journal
    journal:
      path: /var/log/journal
      max_age: 12h
      labels:
        job: systemd-journal
    relabel_configs:
      - source_labels: ['__journal__systemd_unit']
        target_label: 'unit'
      - source_labels: ['__journal__hostname']
        target_label: 'hostname'

Feel free to change the relabel_configs to match what you would use in your own environment.
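The source labels in that job follow a naming rule: Promtail lowercases each journal field name and prefixes it with __journal_. The sketch below only illustrates that rule so you can work out the source label for other fields. journal_label is a hypothetical helper, not something Promtail ships.

```shell
# Hypothetical helper (not part of Promtail): derive the Promtail source
# label for a journal field by lowercasing it and adding the __journal_ prefix.
journal_label() {
  printf '__journal_%s\n' "$(printf '%s' "$1" | tr '[:upper:]' '[:lower:]')"
}

# Example calls:
#   journal_label _SYSTEMD_UNIT
#   journal_label _HOSTNAME
```

The two example calls produce the same source labels used in the relabel_configs above.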

Now we need to add a volume for accessing systemd logs:

extraVolumes:
  - name: journal
    hostPath:
      path: /var/log/journal

And add a new volume mount in Promtail:

extraVolumeMounts:
  - name: journal
    mountPath: /var/log/journal
    readOnly: true

Now that we’re ready, we can update the promtail deployment:

helm upgrade promtail loki/promtail -n monitoring -f values.yaml

Let’s go back to Grafana and type in the query below to fetch all logs related to Volume from Kubelet:

{unit="kubelet.service"} |= "Volume"

Filter expressions are powerful in LogQL: they help you scan through your logs. In this case, the filter keeps only the kubelet log lines that contain the word Volume and drops the rest.

The workflow is simple: You always select a set of label matchers first. This way, you reduce the data you’re planning to scan (such as an application, a namespace or even a cluster). Then, you can apply a set of filters to find the logs you want.
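As a rough local analogy, and not how Loki implements it, a line filter behaves like a fixed-string grep over the lines that the label matchers already selected. In the sketch below, contains mimics the |= filter and excludes mimics the != filter. Both function names are hypothetical.

```shell
# Hypothetical helpers (local analogy only): read log lines on stdin.

# Keep only lines containing the given text, like LogQL's |= filter.
contains() { grep -F -- "$1"; }

# Drop lines containing the given text, like LogQL's != filter.
excludes() { grep -v -F -- "$1"; }

# Example call:
#   printf '%s\n' 'Volume mounted' 'node ready' | contains 'Volume'
```

Chaining the two helpers in a pipe mirrors chaining several filters after a label selector in LogQL.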

Promtail also supports syslog.

Adding Kubernetes events

Kubernetes Events (kubectl get events -n monitoring) are a great way to debug and troubleshoot your Kubernetes cluster. Events contain information such as Node reboot, OOMKiller and Pod failures.

We’ll deploy the eventrouter application created by Heptio, which logs those events to stdout.

But first, we need to configure Promtail. We want to parse the namespace from the log content and add it as a label, so that we can quickly access events by namespace.

Let’s update our pipelineStages to parse logs from the eventrouter:

pipelineStages:
- docker:
- match:
    selector: '{app="eventrouter"}'
    stages:
    - json:
        expressions:
          namespace: event.metadata.namespace
    - labels:
        namespace: ""

Pipeline stages are great ways to parse log content and create labels (which are indexed). If you want to configure more of them, check out the documentation.

Now, update Promtail again:

helm upgrade promtail loki/promtail -n monitoring -f values.yaml

And deploy the eventrouter using:

kubectl create -f https://raw.githubusercontent.com/grafana/loki/master/docs/clients/aws/eks/eventrouter.yaml

serviceaccount/eventrouter created

clusterrole.rbac.authorization.k8s.io/eventrouter created

clusterrolebinding.rbac.authorization.k8s.io/eventrouter created

configmap/eventrouter-cm created

deployment.apps/eventrouter created

Let’s go into Grafana Explore and query events for our new monitoring namespace using {app="eventrouter",namespace="monitoring"}.

For more information about the eventrouter, make sure to read this blog post by Goutham.

Conclusion

That’s it! You can download the final and complete values.yaml if necessary.

Your EKS cluster is now ready, and all your current and future application logs will be shipped to Loki with Promtail. You’ll also be able to explore kubelet logs and Kubernetes events. Since we’ve used a DaemonSet, you’ll automatically grab all your node logs as you scale them.
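After scaling the node group, you may want to confirm that Promtail really runs on every node. The sketch below assumes the DaemonSet is named promtail and lives in the monitoring namespace, as in this tutorial. promtail_ready is a hypothetical helper that compares the ready pod count with the desired one.

```shell
# Hypothetical helper (not from the tutorial): report how many Promtail pods
# are ready and return non-zero when the DaemonSet is not fully rolled out.
promtail_ready() {
  namespace="${1:-monitoring}"
  # Read both counters from the DaemonSet status in a single call.
  status="$(kubectl get daemonset promtail -n "$namespace" \
    -o jsonpath='{.status.numberReady} {.status.desiredNumberScheduled}')"
  ready="${status% *}"     # text before the space
  desired="${status#* }"   # text after the space
  printf '%s/%s Promtail pods ready\n' "$ready" "$desired"
  [ "$ready" = "$desired" ]
}

# Example call:
#   promtail_ready monitoring
```

The exit status makes the helper usable in scripts, for example to wait before running queries against logs from newly added nodes.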

If you want to push this further, check out Joe’s blog post on how to automatically create Grafana dashboard annotations with Loki when you deploy new Kubernetes applications.

And if you need to delete the EKS cluster, simply run eksctl delete cluster --name loki-promtail.